What is Langfuse? Langfuse is an open-source LLM engineering platform that helps teams trace API calls, monitor performance, and debug issues in their AI applications.

Tracing LangChain

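Langfuse Tracing integrates with LangChain through LangChain Callbacks (Python, JS). The Langfuse SDK therefore automatically creates a nested trace for every run of your LangChain application, so you can log, analyze, and debug it.

You can configure the integration through (1) constructor arguments or (2) environment variables. Sign up at cloud.langfuse.com or self-host Langfuse to obtain credentials.

Constructor arguments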
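A minimal sketch of the constructor-argument route. It assumes the v2 Python SDK, where the handler is importable from `langfuse.callback`; newer SDK versions may expose it under a different path, so check your installed version. The key values are placeholders, and `handler_kwargs` and `make_handler` are hypothetical helper names used only for illustration.

```python
def handler_kwargs(public_key: str, secret_key: str,
                   host: str = "https://cloud.langfuse.com") -> dict:
    """Collect the credentials passed to the callback handler constructor."""
    if not public_key or not secret_key:
        raise ValueError("both public_key and secret_key are required")
    return {"public_key": public_key, "secret_key": secret_key, "host": host}


def make_handler(**kwargs):
    # Assumption: v2 SDK import path. Imported lazily so this module
    # still loads when the langfuse package is not installed.
    from langfuse.callback import CallbackHandler
    return CallbackHandler(**kwargs)


kwargs = handler_kwargs("pk-lf-...", "sk-lf-...")  # placeholder keys
# langfuse_handler = make_handler(**kwargs)  # uncomment once real keys are set
```

For a self-hosted deployment, pass your own base URL as `host` instead of the cloud default.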

Environment variables
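The environment-variable route can be sketched as follows. `LANGFUSE_PUBLIC_KEY`, `LANGFUSE_SECRET_KEY`, and `LANGFUSE_HOST` are the variable names the Langfuse SDK reads; the values below are placeholders, and `missing_langfuse_vars` is a hypothetical helper added here to catch an incomplete setup early.

```python
import os

# Placeholder values; replace with the keys from your Langfuse project settings.
os.environ.setdefault("LANGFUSE_PUBLIC_KEY", "pk-lf-...")
os.environ.setdefault("LANGFUSE_SECRET_KEY", "sk-lf-...")
os.environ.setdefault("LANGFUSE_HOST", "https://cloud.langfuse.com")

REQUIRED = ("LANGFUSE_PUBLIC_KEY", "LANGFUSE_SECRET_KEY", "LANGFUSE_HOST")


def missing_langfuse_vars(env=os.environ) -> list:
    """Return the names of required variables that are unset or empty."""
    return [name for name in REQUIRED if not env.get(name)]
```

With these variables set, the callback handler can typically be constructed without arguments, since the SDK falls back to the environment for credentials.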

Tracing LangGraph

This section shows how to use Langfuse, through its LangChain integration, to debug, analyze, and iterate on a LangGraph application.

Initialize Langfuse

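Note: You need to run at least Python 3.11 (GitHub Issue).

Initialize the Langfuse client with the API keys from your project settings in the Langfuse UI, and add them to your environment.

Build a simple chat app with LangGraph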

This section covers the following:

- Build a support chatbot in LangGraph that can answer common questions

- Trace the chatbot's inputs and outputs with Langfuse

Create the agent

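Start by creating a StateGraph. A StateGraph object defines the structure of our chatbot as a state machine. We will add nodes to represent the LLM and the functions the chatbot can call, and edges to specify how the bot transitions between these functions.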
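A minimal sketch of such a graph with a single node, assuming a recent LangGraph release that exports `StateGraph`, `START`, and `END` from `langgraph.graph`. To keep the example free of API keys, the `chatbot` node here is a stand-in that echoes the user's message; in a real application it would call an LLM. `build_graph` is a hypothetical helper name.

```python
from typing import Annotated, TypedDict


def chatbot(state: dict) -> dict:
    """Stand-in node: echoes the last message instead of calling an LLM."""
    last = state["messages"][-1]
    # Messages may arrive as (role, text) tuples or as message objects.
    text = last[1] if isinstance(last, tuple) else getattr(last, "content", str(last))
    return {"messages": [("assistant", f"You asked: {text}")]}


def build_graph():
    # Imported lazily so the node above can be exercised without langgraph installed.
    from langgraph.graph import StateGraph, START, END
    from langgraph.graph.message import add_messages

    class State(TypedDict):
        # add_messages appends new messages instead of overwriting the list
        messages: Annotated[list, add_messages]

    builder = StateGraph(State)
    builder.add_node("chatbot", chatbot)   # node: the (stand-in) LLM step
    builder.add_edge(START, "chatbot")     # edge: entry point
    builder.add_edge("chatbot", END)       # edge: finish after one turn
    return builder.compile()
```

Calling `build_graph()` returns a compiled graph that can be invoked or streamed like any other LangChain runnable.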

Add Langfuse as a callback to the invocation

Now we add the Langfuse callback handler for LangChain to the invocation so the run is traced: `config={"callbacks": [langfuse_handler]}`
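As a sketch of how that config is passed, the hypothetical helper below streams a compiled graph with the handler attached. It assumes `graph` is a compiled LangGraph graph (any object with a `stream(inputs, config=...)` method works) and that `handler` is an already-constructed Langfuse callback handler.

```python
def run_traced(graph, handler, user_text: str) -> list:
    """Stream the graph with the handler attached and collect the emitted events."""
    inputs = {"messages": [("user", user_text)]}
    config = {"callbacks": [handler]}  # the handler receives every nested run
    return list(graph.stream(inputs, config=config))
```

Because the handler is supplied per invocation, the same graph can be run with or without tracing simply by changing the `config` argument.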

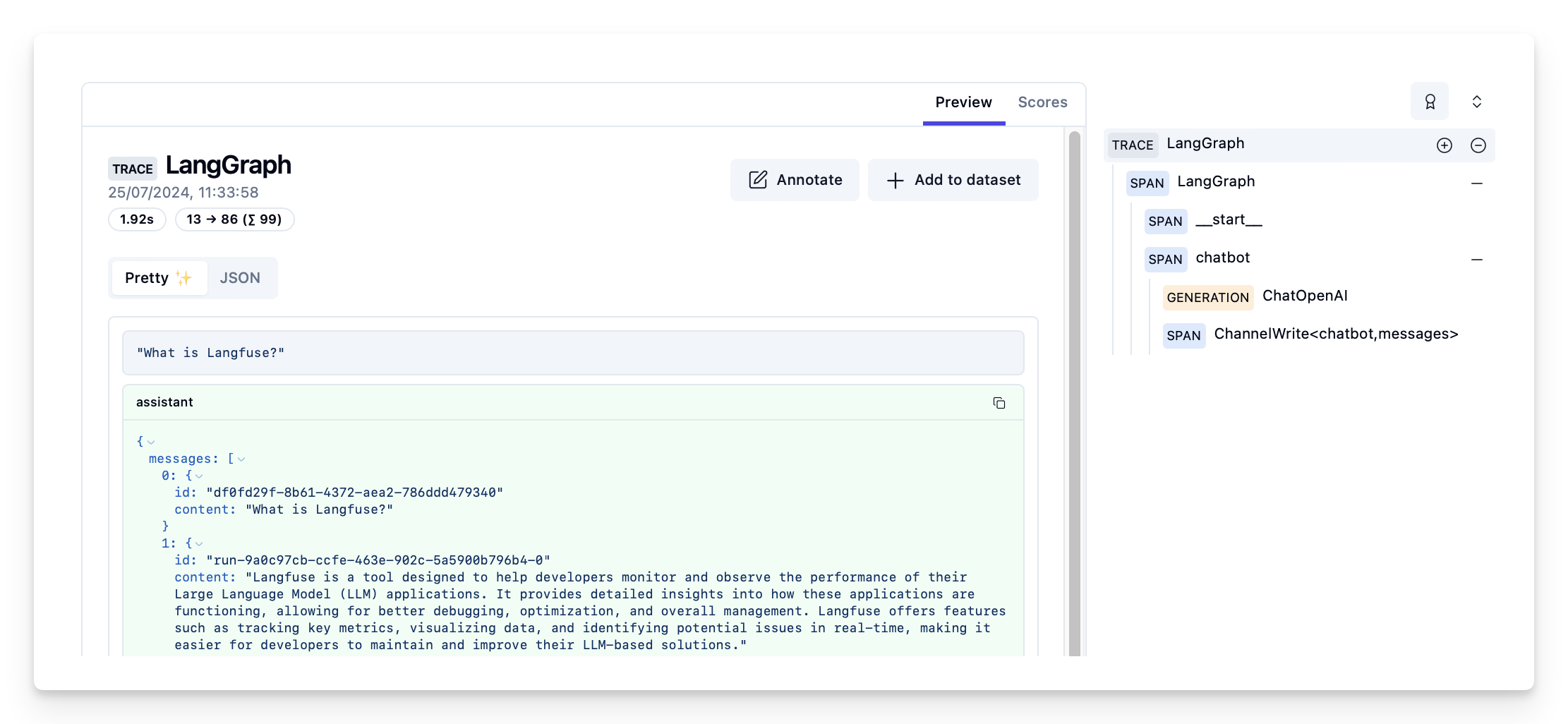

View the trace in Langfuse

Example trace in Langfuse: https://cloud.langfuse.com/project/cloramnkj0002jz088vzn1ja4/traces/d109e148-d188-4d6e-823f-aac0864afbab

- See the full notebook for more examples.

- To learn how to evaluate the performance of your LangGraph application, see the LangGraph evaluation guide.
