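Argilla is an open-source data curation platform for LLMs. With Argilla, anyone can speed up data curation using both human and machine feedback, and so build more robust language models. Argilla provides support for each step of the MLOps lifecycle, from data labeling to model monitoring.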

The ArgillaCallbackHandler tracks the inputs and responses of your LLM to generate a dataset in Argilla.

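Tracking an LLM's inputs and outputs is useful for building datasets for future fine-tuning. This matters most when you use an LLM to generate data for a specific task, such as question answering, summarization, or translation.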

Installation and setup

pip install -qU langchain langchain-openai argilla

Getting API credentials

To get the Argilla API credentials, follow these steps:

- Go to your Argilla UI.
- Click on your profile picture and go to "My settings".
- Copy the API Key.

import os

os.environ["ARGILLA_API_URL"] = "..."
os.environ["ARGILLA_API_KEY"] = "..."
os.environ["OPENAI_API_KEY"] = "..."
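Before moving on, it can help to confirm that none of the three variables is unset or still holds the "..." placeholder. The following is a minimal sketch and not part of the Argilla or LangChain APIs: the helper name `missing_env_vars` and the choice to treat "..." as unset are assumptions made for illustration.

```python
import os
from typing import List, Mapping, Sequence

# Variable names taken from the configuration step above.
REQUIRED_VARS = ("ARGILLA_API_URL", "ARGILLA_API_KEY", "OPENAI_API_KEY")


def missing_env_vars(
    names: Sequence[str] = REQUIRED_VARS,
    env: Mapping[str, str] = os.environ,
) -> List[str]:
    """Return the names that are unset, empty, or still the "..." placeholder."""
    return [name for name in names if env.get(name, "").strip() in ("", "...")]


if __name__ == "__main__":
    missing = missing_env_vars()
    if missing:
        print("Set these variables before continuing:", ", ".join(missing))
```

Passing a plain dictionary as `env` makes the check easy to try without touching the real environment.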

Setting up Argilla

To use the ArgillaCallbackHandler, we need to create a new FeedbackDataset in Argilla to keep track of your LLM experiments. To do so, use the following code:

import argilla as rg
from packaging.version import parse as parse_version

# FeedbackDataset requires Argilla v1.8.0 or higher, so fail early on older versions.
if parse_version(rg.__version__) < parse_version("1.8.0"):
    raise RuntimeError(
        "`FeedbackDataset` is only available in Argilla v1.8.0 or higher, please "
        "upgrade `argilla` as `pip install argilla --upgrade`."
    )

dataset = rg.FeedbackDataset(
    fields=[
        rg.TextField(name="prompt"),
        rg.TextField(name="response"),
    ],
    questions=[
        rg.RatingQuestion(
            name="response-rating",
            description="How would you rate the quality of the response?",
            values=[1, 2, 3, 4, 5],
            required=True,
        ),
        rg.TextQuestion(
            name="response-feedback",
            description="What feedback do you have for the response?",
            required=False,
        ),
    ],
    guidelines="You're asked to rate the quality of the response and provide feedback.",
)

# Connect to the Argilla server, then upload the dataset under the name the callback will use.
rg.init(
    api_url=os.environ["ARGILLA_API_URL"],
    api_key=os.environ["ARGILLA_API_KEY"],
)

dataset.push_to_argilla("langchain-dataset")

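📌 NOTE: at the moment, only prompt-response pairs are supported as FeedbackDataset.fields, so the ArgillaCallbackHandler tracks just the prompt (the LLM input) and the response (the LLM output).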
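To make the prompt-response pairing concrete, here is a minimal standalone sketch of the bookkeeping such a handler needs. It is an illustration, not the ArgillaCallbackHandler source: the class `PairRecorder` is hypothetical, it only borrows the `on_llm_start` / `on_llm_end` hook names from LangChain callbacks, and it takes plain strings rather than LangChain result objects.

```python
from typing import Dict, List


class PairRecorder:
    """Hypothetical recorder that pairs each prompt with its response."""

    def __init__(self) -> None:
        # run_id -> prompts that are still waiting for a response
        self._pending: Dict[str, List[str]] = {}
        # Finished records, keyed like the dataset fields "prompt" and "response"
        self.records: List[Dict[str, str]] = []

    def on_llm_start(self, prompts: List[str], run_id: str) -> None:
        # Remember the prompts until the matching run finishes.
        self._pending[run_id] = list(prompts)

    def on_llm_end(self, responses: List[str], run_id: str) -> None:
        # Pair prompts and responses positionally and store one record per pair.
        prompts = self._pending.pop(run_id)
        for prompt, response in zip(prompts, responses):
            self.records.append({"prompt": prompt, "response": response.strip()})


recorder = PairRecorder()
recorder.on_llm_start(["Tell me a joke", "Tell me a poem"], run_id="run-1")
recorder.on_llm_end(["\n\nA joke.", "\n\nA poem."], run_id="run-1")
print(recorder.records)
```

Each record carries exactly the two text fields defined on the dataset, which is why nothing beyond the prompt and the response can be stored.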

Tracking

To use the ArgillaCallbackHandler, you can either use the following code or reproduce one of the example scenarios in the sections below.

from langchain_community.callbacks.argilla_callback import ArgillaCallbackHandler

argilla_callback = ArgillaCallbackHandler(
    dataset_name="langchain-dataset",
    api_url=os.environ["ARGILLA_API_URL"],
    api_key=os.environ["ARGILLA_API_KEY"],
)

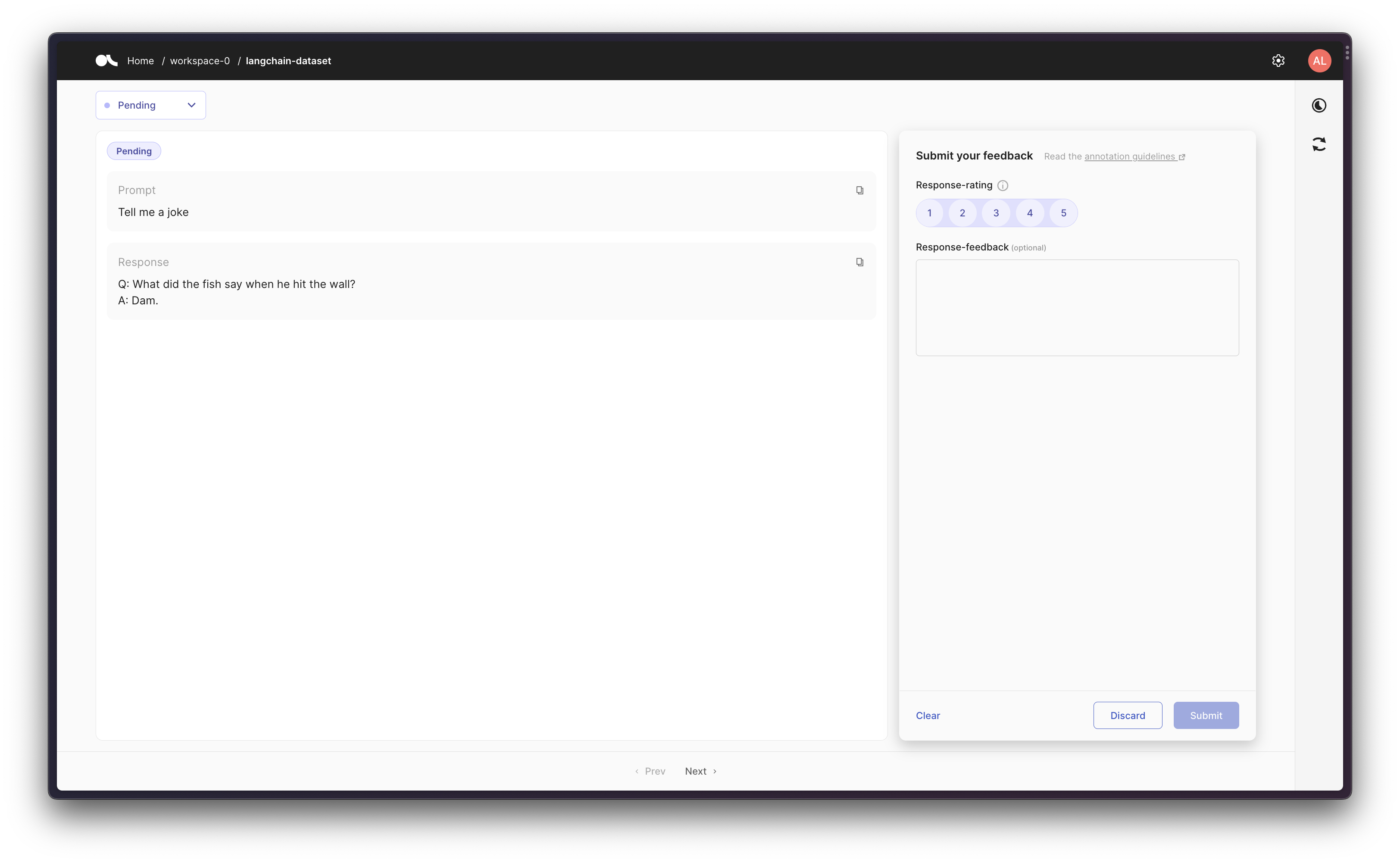

Scenario 1: Tracking an LLM

First, let's run a single LLM a few times and capture the resulting prompt-response pairs in Argilla.

from langchain_core.callbacks.stdout import StdOutCallbackHandler
from langchain_openai import OpenAI

argilla_callback = ArgillaCallbackHandler(
    dataset_name="langchain-dataset",
    api_url=os.environ["ARGILLA_API_URL"],
    api_key=os.environ["ARGILLA_API_KEY"],
)
callbacks = [StdOutCallbackHandler(), argilla_callback]

llm = OpenAI(temperature=0.9, callbacks=callbacks)
# Six prompts in total: each of the two prompts is sent three times.
llm.generate(["Tell me a joke", "Tell me a poem"] * 3)

LLMResult(generations=[[Generation(text='\n\nQ: What did the fish say when he hit the wall? \nA: Dam.', generation_info={'finish_reason': 'stop', 'logprobs': None})], [Generation(text='\n\nThe Moon \n\nThe moon is high in the midnight sky,\nSparkling like a star above.\nThe night so peaceful, so serene,\nFilling up the air with love.\n\nEver changing and renewing,\nA never-ending light of grace.\nThe moon remains a constant view,\nA reminder of life’s gentle pace.\n\nThrough time and space it guides us on,\nA never-fading beacon of hope.\nThe moon shines down on us all,\nAs it continues to rise and elope.', generation_info={'finish_reason': 'stop', 'logprobs': None})], [Generation(text='\n\nQ. What did one magnet say to the other magnet?\nA. "I find you very attractive!"', generation_info={'finish_reason': 'stop', 'logprobs': None})], [Generation(text="\n\nThe world is charged with the grandeur of God.\nIt will flame out, like shining from shook foil;\nIt gathers to a greatness, like the ooze of oil\nCrushed. Why do men then now not reck his rod?\n\nGenerations have trod, have trod, have trod;\nAnd all is seared with trade; bleared, smeared with toil;\nAnd wears man's smudge and shares man's smell: the soil\nIs bare now, nor can foot feel, being shod.\n\nAnd for all this, nature is never spent;\nThere lives the dearest freshness deep down things;\nAnd though the last lights off the black West went\nOh, morning, at the brown brink eastward, springs —\n\nBecause the Holy Ghost over the bent\nWorld broods with warm breast and with ah! bright wings.\n\n~Gerard Manley Hopkins", generation_info={'finish_reason': 'stop', 'logprobs': None})], [Generation(text='\n\nQ: What did one ocean say to the other ocean?\nA: Nothing, they just waved.', generation_info={'finish_reason': 'stop', 'logprobs': None})], [Generation(text="\n\nA poem for you\n\nOn a field of green\n\nThe sky so blue\n\nA gentle breeze, the sun above\n\nA beautiful world, for us to love\n\nLife is a journey, full of surprise\n\nFull of joy and full of surprise\n\nBe brave and take small steps\n\nThe future will be revealed with depth\n\nIn the morning, when dawn arrives\n\nA fresh start, no reason to hide\n\nSomewhere down the road, there's a heart that beats\n\nBelieve in yourself, you'll always succeed.", generation_info={'finish_reason': 'stop', 'logprobs': None})]], llm_output={'token_usage': {'completion_tokens': 504, 'total_tokens': 528, 'prompt_tokens': 24}, 'model_name': 'text-davinci-003'})

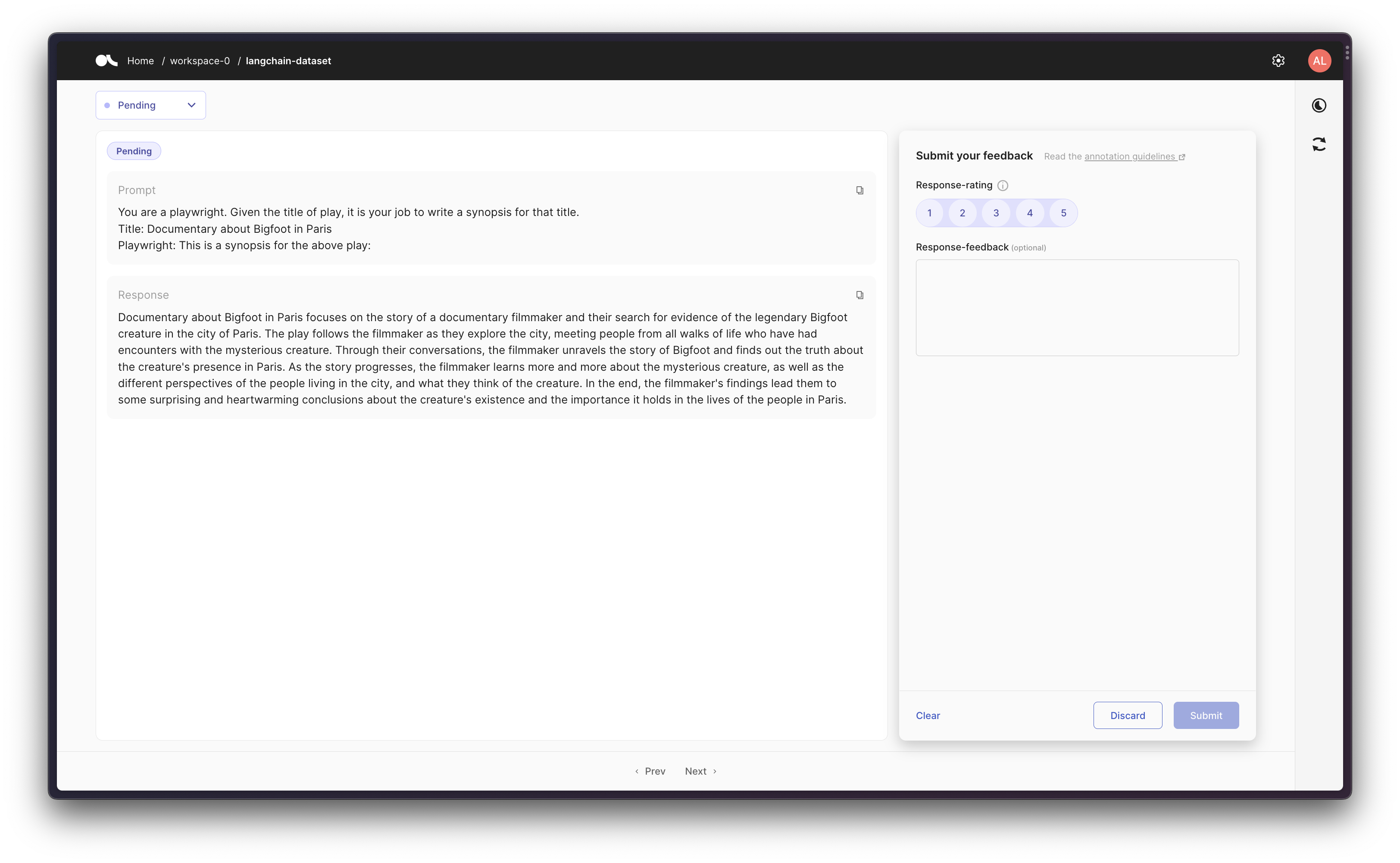

Scenario 2: Tracking an LLM in a chain

Next, we can create a chain using a prompt template, and then track the initial prompt and the final response in Argilla.

from langchain_classic.chains import LLMChain
from langchain_core.callbacks.stdout import StdOutCallbackHandler
from langchain_core.prompts import PromptTemplate
from langchain_openai import OpenAI

argilla_callback = ArgillaCallbackHandler(
    dataset_name="langchain-dataset",
    api_url=os.environ["ARGILLA_API_URL"],
    api_key=os.environ["ARGILLA_API_KEY"],
)
callbacks = [StdOutCallbackHandler(), argilla_callback]
llm = OpenAI(temperature=0.9, callbacks=callbacks)

template = """You are a playwright. Given the title of play, it is your job to write a synopsis for that title.
Title: {title}
Playwright: This is a synopsis for the above play:"""
prompt_template = PromptTemplate(input_variables=["title"], template=template)
synopsis_chain = LLMChain(llm=llm, prompt=prompt_template, callbacks=callbacks)

# Each dict fills the {title} placeholder for one chain run.
test_prompts = [{"title": "Documentary about Bigfoot in Paris"}]
synopsis_chain.apply(test_prompts)

> Entering new LLMChain chain...
Prompt after formatting:
You are a playwright. Given the title of play, it is your job to write a synopsis for that title.
Title: Documentary about Bigfoot in Paris
Playwright: This is a synopsis for the above play:

> Finished chain.

[{'text': "\n\nDocumentary about Bigfoot in Paris focuses on the story of a documentary filmmaker and their search for evidence of the legendary Bigfoot creature in the city of Paris. The play follows the filmmaker as they explore the city, meeting people from all walks of life who have had encounters with the mysterious creature. Through their conversations, the filmmaker unravels the story of Bigfoot and finds out the truth about the creature's presence in Paris. As the story progresses, the filmmaker learns more and more about the mysterious creature, as well as the different perspectives of the people living in the city, and what they think of the creature. In the end, the filmmaker's findings lead them to some surprising and heartwarming conclusions about the creature's existence and the importance it holds in the lives of the people in Paris."}]

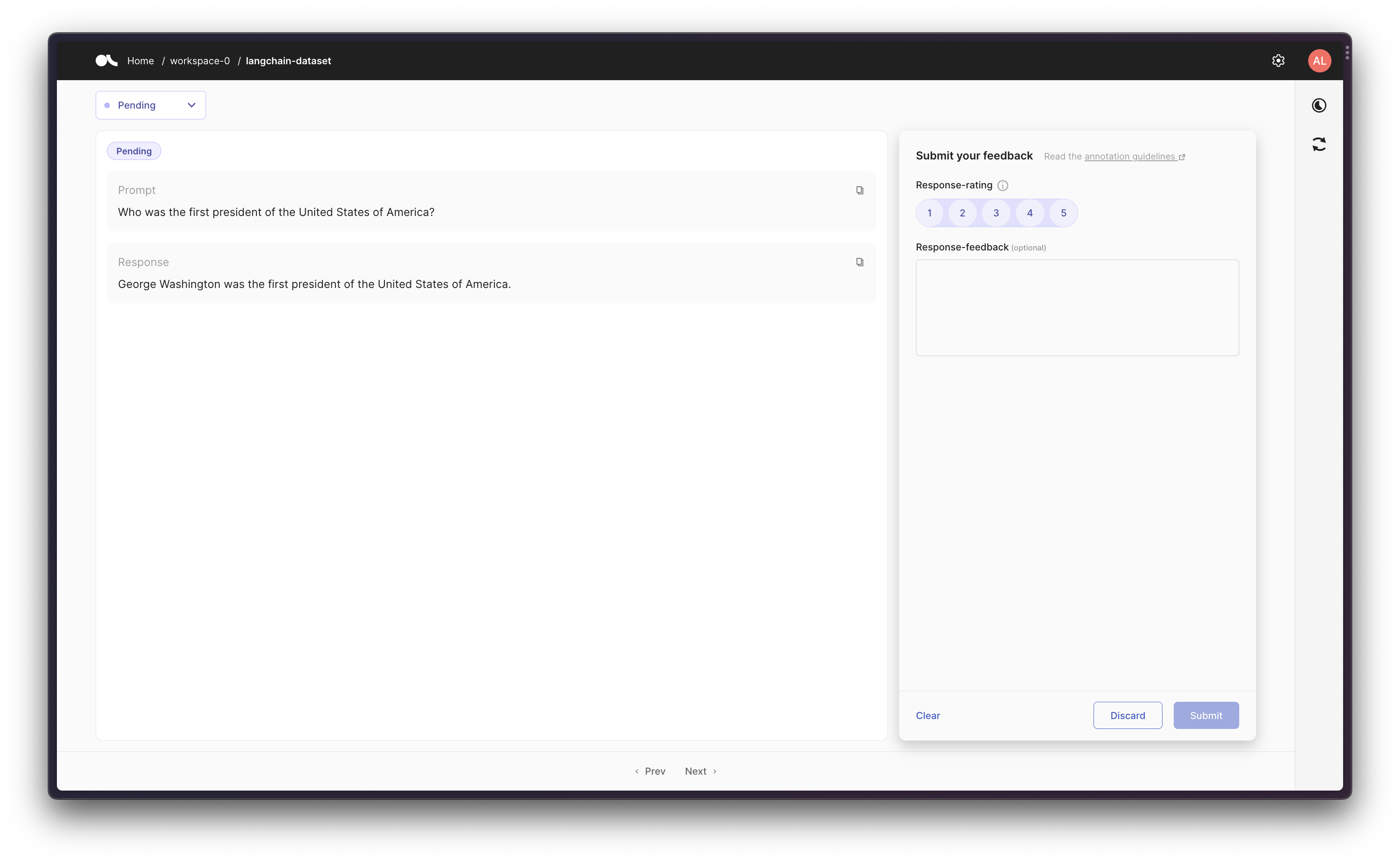

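Scenario 3: Using an agent with tools

Finally, as a more advanced workflow, you can create an agent that uses tools. The ArgillaCallbackHandler keeps track of the input and the output, but not of the intermediate steps or thoughts: for a given prompt, it logs the original prompt and the final response.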
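The "original prompt in, final response out" behaviour described above can be sketched without LangChain. The snippet below is a simplified simulation under stated assumptions, not the handler's actual implementation: `TopLevelTracker` and its `on_start` / `on_end` methods are hypothetical, and a run is treated as top-level when its `parent_run_id` is `None`, which mirrors how LangChain callbacks distinguish root runs from nested ones.

```python
from typing import Dict, List, Optional


class TopLevelTracker:
    """Hypothetical tracker that keeps only root-run input/output pairs."""

    def __init__(self) -> None:
        self._inputs: Dict[str, str] = {}  # root run_id -> original prompt
        self.records: List[Dict[str, str]] = []

    def on_start(self, text: str, run_id: str, parent_run_id: Optional[str] = None) -> None:
        # Nested runs (tool calls, intermediate thoughts) have a parent and are skipped.
        if parent_run_id is None:
            self._inputs[run_id] = text

    def on_end(self, text: str, run_id: str, parent_run_id: Optional[str] = None) -> None:
        # Only a run whose input was stored (a root run) produces a record.
        if run_id in self._inputs:
            self.records.append({"prompt": self._inputs.pop(run_id), "response": text})


tracker = TopLevelTracker()
question = "Who was the first president of the United States of America?"
answer = "George Washington was the first president of the United States of America."

tracker.on_start(question, run_id="agent")
tracker.on_start("Search: first president", run_id="tool", parent_run_id="agent")
tracker.on_end("George Washington", run_id="tool", parent_run_id="agent")
tracker.on_end(answer, run_id="agent")
print(tracker.records)
```

The search step starts and ends inside the agent run, yet only one record is produced: the question paired with the final answer.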

NOTE: this scenario uses the Google Search API (SerpApi), so you need to install google-search-results (pip install google-search-results) and set your SerpApi API key (os.environ["SERPAPI_API_KEY"] = "...", which you can find at serpapi.com/dashboard). Otherwise, the example below will not work.

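# Assumes the legacy agent API (initialize_agent / AgentExecutor) from langchain_classic,
# which is what produces the "Entering new AgentExecutor chain..." trace shown below.
from langchain_classic.agents import AgentType, initialize_agent
from langchain_community.agent_toolkits.load_tools import load_tools
from langchain_core.callbacks.stdout import StdOutCallbackHandler
from langchain_openai import OpenAI

argilla_callback = ArgillaCallbackHandler(
    dataset_name="langchain-dataset",
    api_url=os.environ["ARGILLA_API_URL"],
    api_key=os.environ["ARGILLA_API_KEY"],
)
callbacks = [StdOutCallbackHandler(), argilla_callback]
llm = OpenAI(temperature=0.9, callbacks=callbacks)

tools = load_tools(["serpapi"], llm=llm, callbacks=callbacks)
agent = initialize_agent(
    tools,
    llm,
    agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION,
    callbacks=callbacks,
)
# run() returns the final answer as a plain string.
agent.run("Who was the first president of the United States of America?")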

> Entering new AgentExecutor chain...
 I need to answer a historical question
Action: Search
Action Input: "who was the first president of the United States of America"
Observation: George Washington
Thought: George Washington was the first president
Final Answer: George Washington was the first president of the United States of America.

> Finished chain.

'George Washington was the first president of the United States of America.'
